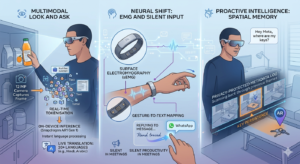

At the heart of these glasses is a Multimodal Large Language Model (MLLM) optimised for the Snapdragon AR1 Gen 1 processor. When you prompt the device to “look and tell me”, a specialised semantic segmentation algorithm takes over.

- Real-Time Tokenisation: The 12 MP camera captures a frame, and the model immediately breaks it into numerical “tokens”. From these it identifies objects, reads text, and assesses context (such as recognising a specific landmark or a nutritional label).

- On-Device Inference: To keep the interaction fluid, the initial “understanding” of the image happens on the hardware itself rather than waiting for a round-trip to the cloud. Skipping that network hop keeps latency in the millisecond range, so features like Live Translation (now supporting 20+ languages, including Hindi and Arabic) feel like a natural conversation.
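
To make the idea of image “tokens” concrete, here is a minimal sketch of one common approach in vision models: cutting a frame into fixed-size patches and flattening each patch into a vector. This is an illustration only, not the glasses' actual pipeline; the `patchify` function, the patch size, and the toy 4x4 frame are all assumptions made for the example.

```python
from typing import List

Frame = List[List[int]]  # grayscale frame as rows of pixel intensities


def patchify(frame: Frame, patch: int) -> List[List[int]]:
    """Split a frame into non-overlapping patch x patch tiles, each flattened to one vector."""
    height, width = len(frame), len(frame[0])
    if height % patch or width % patch:
        raise ValueError("frame dimensions must be multiples of the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            # Read the tile row by row so every token has the same layout.
            tokens.append([
                frame[y][x]
                for y in range(top, top + patch)
                for x in range(left, left + patch)
            ])
    return tokens


# Toy 4x4 "frame" (hypothetical); a real camera frame is far larger and has colour channels.
frame = [
    [0, 1, 2, 3],
    [4, 5, 6, 7],
    [8, 9, 10, 11],
    [12, 13, 14, 15],
]
tokens = patchify(frame, patch=2)
print(len(tokens))  # 4
print(tokens[0])    # [0, 1, 4, 5]
```

In a real model each flattened patch would then be projected into an embedding before the language model sees it; that step is omitted here to keep the sketch short.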

The Neural Shift: EMG and Silent Input

The most significant technical breakthrough this year is Neural Handwriting, enabled by the Meta Neural Band.

- Surface Electromyography (sEMG): Instead of using power-hungry cameras to watch your hands, the wristband’s sensors detect the electrical activity produced in your wrist and forearm muscles when your brain signals them to move.

- Gesture-to-Text Mapping: A machine-learning model interprets these subtle “micro-gestures”. You can trace letters on your thigh or a desk, and the algorithm translates those muscle twitches into digital text for a WhatsApp reply, allowing for completely silent productivity in a meeting or a quiet room.
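
The gesture-mapping step can be pictured with a deliberately simple sketch: summarise a short window of sEMG samples as one root-mean-square (RMS) amplitude per channel, then pick the gesture whose reference pattern is closest. The source does not describe the band's actual model, so the nearest-centroid classifier, the two-channel layout, the gesture labels, and every number below are assumptions chosen for illustration.

```python
import math
from typing import Dict, List, Sequence

Window = Sequence[Sequence[float]]  # one list of samples per electrode channel


def rms_features(window: Window) -> List[float]:
    """Root-mean-square amplitude per channel, a common sEMG feature."""
    return [math.sqrt(sum(s * s for s in ch) / len(ch)) for ch in window]


def classify(window: Window, centroids: Dict[str, List[float]]) -> str:
    """Return the label whose centroid is closest to the window's features."""
    feats = rms_features(window)
    return min(centroids, key=lambda label: math.dist(feats, centroids[label]))


# Hypothetical per-gesture centroids for a two-channel band (made-up numbers).
centroids = {
    "rest": [0.05, 0.05],
    "pinch": [0.80, 0.10],
    "swipe": [0.10, 0.70],
}

window = [
    [0.9, -0.8, 0.7, -0.9],  # channel 0: strong activity
    [0.1, -0.1, 0.1, -0.1],  # channel 1: near baseline
]
print(classify(window, centroids))  # pinch
```

Recognising traced letters would need a model that follows how the signal evolves over time rather than a single window, but the overall shape is the same: raw muscle signals go in, features are extracted, and a learned mapping produces a symbol.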

Proactive Intelligence: Spatial Memory

In 2026, the glasses have shifted from reactive to agentic. They now employ a spatial memory algorithm that maintains a privacy-protected, low-power metadata log of your environment. This log is what enables “object persistence”: you can ask, “Hey Meta, where did I leave my keys?” and the AI will search its recent visual history to report the time and location where it last saw them.
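
One way to picture such a metadata log is a small store that keeps only the latest sighting of each object (a label, a coarse location, and a timestamp) and forgets anything older than a retention window. The sketch below is hypothetical: the `Sighting` and `SpatialMemory` names, the 24-hour retention period, and the sample data are assumptions, not details of the product.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Sighting:
    label: str        # detected object class, e.g. "keys"
    location: str     # coarse place tag, e.g. "kitchen counter"
    timestamp: float  # seconds since epoch


class SpatialMemory:
    """Keeps only the most recent sighting per object label (metadata, no images)."""

    def __init__(self, max_age_s: float) -> None:
        self.max_age_s = max_age_s
        self._last_seen: Dict[str, Sighting] = {}

    def observe(self, sighting: Sighting) -> None:
        current = self._last_seen.get(sighting.label)
        if current is None or sighting.timestamp >= current.timestamp:
            self._last_seen[sighting.label] = sighting

    def where_is(self, label: str, now: float) -> Optional[Sighting]:
        sighting = self._last_seen.get(label)
        if sighting is None or now - sighting.timestamp > self.max_age_s:
            return None  # never seen, or older than the retention window
        return sighting


# Assumed 24-hour retention window and made-up sightings.
memory = SpatialMemory(max_age_s=24 * 3600)
memory.observe(Sighting("keys", "hallway shelf", timestamp=1_000.0))
memory.observe(Sighting("keys", "kitchen counter", timestamp=5_000.0))
memory.observe(Sighting("wallet", "desk", timestamp=2_000.0))

hit = memory.where_is("keys", now=6_000.0)
print(hit.location if hit else "not seen recently")  # kitchen counter
```

Storing one compact record per object, rather than raw frames, is what would keep a log like this both low-power and easier to protect.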